Token Economy Is Also Architecture

Token Economy Is Also Architecture

When people talk about AI-assisted development, token usage is usually treated as a pricing issue.

That makes sense, of course. If you are using paid tools, sooner or later cost becomes part of the conversation. But after spending more time building with AI across different tools, workflows, and project styles, I started to see something else more clearly:

token economy is not only about money. It is also about structure.

It affects how clearly you think, how much noise you introduce into the process, how reliable the outputs become, and how long you can sustain coherence while building something real.

At some point, I stopped seeing tokens as just a consumption metric and started seeing them as a kind of working space. A limited cognitive room shared between me, the model, the instructions, the code, and the task at hand.

And just like in software architecture, when that space is badly organized, everything starts getting harder.

The Wrong Way to Think About Tokens

A common mistake is to reduce the whole discussion to one simple question: “How do I spend fewer tokens?”

That question is not wrong, but I think it is too small.

The deeper question is closer to this:

How do I preserve clarity and execution quality while working with limited context?

Because in practice, the real problem is not always high usage by itself. Sometimes the bigger problem is that the context becomes bloated, repetitive, and unfocused. The model starts spending effort understanding the room instead of helping solve the problem.

It is a bit like inviting a very capable engineer into a workshop where every table is covered with tools, papers, unfinished sketches, and half-relevant notes. The engineer may still help, but a lot of energy will be wasted just figuring out what actually matters.

That is why I think token economy should be treated less like budgeting and more like design.

More Context Is Not Always Better

This was one of the most important lessons for me.

At first, it is tempting to believe that more context always means better results. So you keep adding more documents, more explanations, more background, more previous decisions, more examples, more constraints.

Sometimes that helps. But beyond a certain point, it starts doing the opposite.

Too much context creates friction. It introduces ambiguity. It makes instructions compete with each other. It mixes what is essential with what is merely historical. And once that happens, the model may still produce something useful, but with less precision and less consistency.

In other words, context has diminishing returns.

There is a threshold after which adding more no longer improves understanding. It only increases cognitive load.

That is why good AI-assisted development is not just about feeding the model more information. It is about deciding what deserves to be present in the current moment.

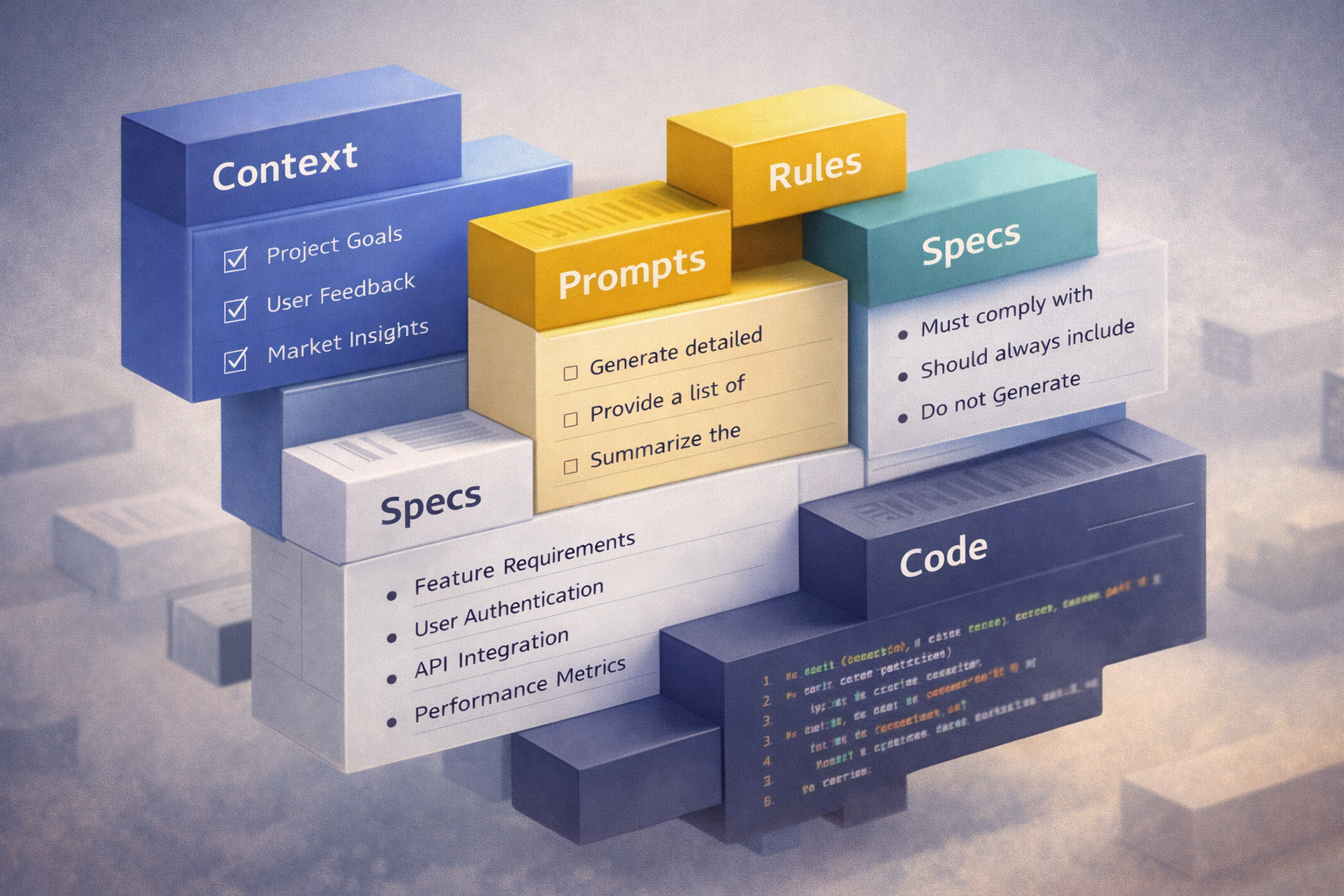

Bad Context Behaves Like Bad Architecture

The more I worked with AI tools, the more this started to resemble familiar engineering problems.

A bloated prompt behaves a lot like a bloated codebase.

A tangled specification behaves like a module with too many responsibilities.

Repeated instructions across prompts behave like duplicated business rules.

Mixed frontend, backend, mobile, and infra concerns inside one giant conversation feel a lot like poor separation of concerns.

Once I started seeing that parallel, something clicked for me: a lot of what we call “saving tokens” is really just applying engineering discipline to context.

That means the solution is often not clever prompting in the narrow sense. The solution is better organization.

Small, Stable Context Beats Large, Impressive Context

One practical shift that helped me a lot was learning to prefer small, stable, reusable context over giant, impressive instructions.

A huge context can look sophisticated. It can feel complete. It can even make you feel safe, because everything is “there.”

But in many cases, what actually performs better is a much smaller set of materials:

- a short project overview

- a document describing the current phase

- one focused feature spec

- one task description

- one clear expected output

That is often enough.

The point is not to starve the model of information. The point is to avoid drowning it in information that does not belong to the current step.

I think of it almost like packing for a trip. Bringing everything you own does not make the trip easier. It just makes movement harder.

Specification Design Affects Token Efficiency

One thing I learned in practice is that token economy starts long before the prompt itself.

It starts in how you write specifications.

If your specs are too long, too repetitive, too narrative, too broad, or too mixed, you are already creating waste before any implementation begins.

What tends to work better is writing specifications in layers.

For example:

- one document for overall project direction

- one document for the current phase

- one document for a specific feature

- one task file for the exact change being implemented now

This lets you load only what is relevant at each step.

Instead of reopening the whole project every time, you only reopen the part of the house where work is happening.

That saves tokens, yes. But more importantly, it preserves precision.

Repetition Is One of the Quietest Sources of Waste

Another thing that became obvious over time is how much token waste comes from repetition.

Sometimes we repeat the same project rules over and over again:

- code style

- architecture preferences

- naming conventions

- testing expectations

- commit format

- language choices

- documentation style

At first, that feels harmless. It even feels safe. But repeated enough times, it becomes a tax on every interaction.

If a rule is stable, it should not need to be rewritten every single time.

Whenever possible, stable instructions should move into a better home:

- workflow rules

- skills

- system-level guidance

- reusable context files

- short standards documents

The less you have to restate by hand, the more room you have for the actual work.

Separation of Context Is a Technical Skill

This is one of the most practical habits I have been trying to develop.

Not everything belongs in the same conversational space.

If I am working on packaging for Linux, I do not want the model spending attention on frontend interactions.

If I am fixing a backend domain rule, I do not want mobile concerns diluting the response.

If I am refining copy or UX language, I do not want infrastructure decisions sitting in the same frame unless they are directly relevant.

Separating context by area is not just about being tidy. It is about reducing unnecessary collisions.

In traditional engineering, we separate concerns because it helps maintainability and correctness. In AI-assisted development, that same principle also helps preserve context quality.

Summaries Are Compression for Thought

One habit that I think deserves more attention is summarization.

Long sessions accumulate a lot of useful material, but they also accumulate a lot of residue: false starts, discarded ideas, repeated clarifications, and outdated assumptions.

If you keep carrying all of that forward, the conversation becomes heavier and less efficient.

A good operational summary acts almost like compression.

It lets you preserve the important parts:

- what was decided

- what was implemented

- what remains open

- what constraints still apply

And it lets you leave behind what no longer matters.

In practice, this often becomes a better continuation point than the full conversation itself.

Not because the original exchange had no value, but because the value has already been extracted.

Not Every Step Deserves the Same Tool

Another overlooked part of token economy is tool selection.

Some tools are better for thinking through structure. Others are better for writing code. Others are better for reviewing, summarizing, or generating documentation.

If you use the same tool for every kind of task, there is a good chance you are paying unnecessary overhead, either in verbosity, in rework, or in poor fit.

Sometimes the best way to save tokens is not to optimize the prompt, but to choose a better executor for the job.

A planning-heavy task may benefit from one kind of model or workflow. A precise bug fix may benefit from another. A local CLI may be better than a large orchestration layer for a narrow implementation step.

The question is not only “which model is smartest?” It is also “which tool creates the least waste for this kind of work?”

Orchestration Also Has a Cost

I like orchestration. I think it has real value.

But I also think people sometimes underestimate its cost.

An orchestrator can coordinate flows, route context, manage steps, and improve consistency. That is useful. But it is still another layer in the loop. Another layer interpreting, rewriting, selecting, and sometimes expanding the work.

That means orchestration should be used where it creates real leverage, not just because it feels sophisticated.

Sometimes a direct interaction with a focused tool is more efficient than sending everything through an intelligent middle layer.

Again, this is not just about cheaper usage. It is about cleaner execution.

A simple path with fewer handoffs often preserves more intent.

Good Engineering Habits Reduce Token Waste Naturally

What I find most interesting is that many of the best ways to improve token economy are not exotic at all.

They are just good engineering habits:

- break work into phases

- keep specifications modular

- separate concerns

- commit frequently

- isolate changes

- record stable decisions

- avoid unnecessary rewrites

- summarize before continuing

- choose tools deliberately

None of this was invented by AI.

What changed is that AI made the cost of bad organization more visible. In a normal codebase, messy structure hurts over time. In AI-assisted development, messy structure often hurts immediately.

That is why the discipline matters even more.

Token Economy Is Also About Mental Energy

There is another side to this that I think is worth saying clearly: poor token usage does not only waste machine resources. It also wastes human attention.

When the context is too mixed, too large, or too repetitive, the developer gets tired too.

You review more noise. You restate more things. You correct more misunderstandings. You lose the sense of momentum. And instead of feeling assisted, you feel like you are constantly dragging the interaction back toward clarity.

That is why I do not see token economy as something merely technical.

It is also about protecting flow.

It is about making it easier to think, easier to review, and easier to keep moving without turning every task into a context-management problem.

Architecture Is Not Only in the Codebase

Maybe that is the main point I wanted to capture here.

When we work with AI, architecture does not live only in folders, modules, interfaces, and infrastructure. It also shows up in how we structure context, how we sequence work, how we separate decisions, and how we preserve clarity across iterations.

In that sense, token economy is not a side concern.

It is part of how the work is shaped.

The moment you start treating context as a real resource — not infinite, not free, not neutral — you begin to notice that many AI development problems are actually architecture problems in disguise.

Wrapping Up

I still think there is a lot to learn here.

AI-assisted development is evolving quickly, and the tools keep changing. But at least for me, one thing has become clear: token economy is not just about spending less. It is about building better conditions for good execution.

It is about reducing noise, protecting intent, and keeping the working space usable.

So yes, tokens matter because they cost money.

But they also matter because they shape the quality of the conversation, the precision of the implementation, and the sustainability of the workflow.

And once you start seeing that, it becomes hard not to conclude:

token economy is also architecture.